Because simple is the enemy of fun

I have been working for a few years now. After leaving academia, I did not expect to be able to retain control over my work-provided machine for so long. Over these years I changed employers a number of times, and every time I was given a laptop to work with. Thanks to the small size of the companies I was employed by at the beginning of my career, no strict IT policy was in place and I was able to install my favorite GNU/Linux[1] flavor every time (Archlinux, BTW). Then, some years ago, I made the jump to a slightly bigger company (a startup that was transitioning into a corporation) and hit the wall of IT bureaucracy for the first time: I was required to install EDR[2] software on the company laptop. My drama was that I was also using said laptop as my personal one (I know, bad idea).

After changing companies and realizing that this was going to be the industry standard, I decided to buy a personal laptop and removed all my stuff from the work machine.

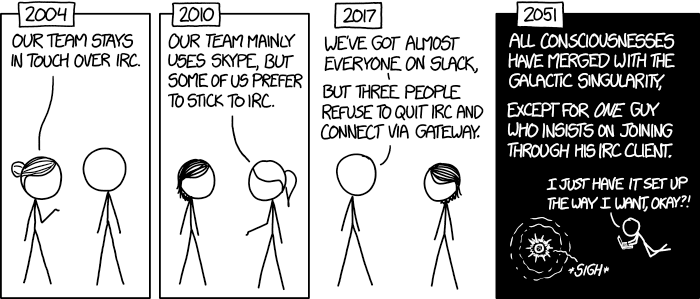

This could have been the end of the story: and they lived happily ever after. Not so fast. I have come to like most of my job-related routine, but from time to time I also want to slack off at my own pace, like chatting with my friends over IRC or hacking on something I don't necessarily feel comfortable with my employer knowing about[3].

One possible solution would be to keep the two laptops turned on and open side by side, and switch between them. But I am lazy and I do not have enough space on my desk. I want to use just one screen and the same keyboard and mouse to interact with both laptops.

First iteration

My first iteration was to open a remote desktop session to my work laptop from my personal laptop.

This was not ideal: the keyboard capture logic depends heavily on the remote desktop technology and program (VNC vs RDP, different clients implementing them in slightly different ways). Moreover, when my focus was away from the work remote desktop session, I often missed the notifications from that environment.

I put up with this solution for several months, but then I realized I wanted a better setup.

Waypipe

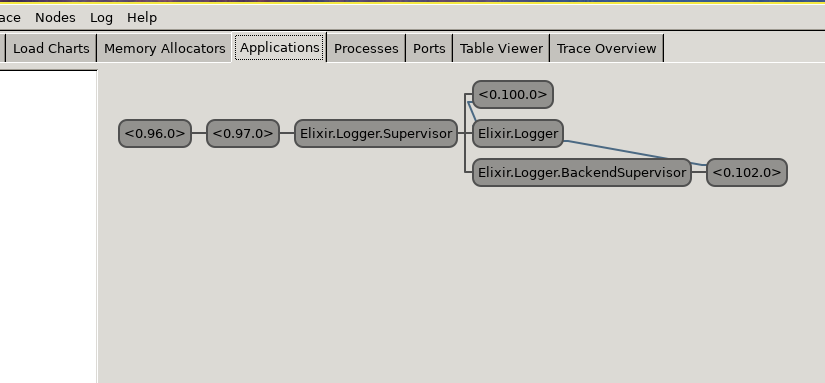

I really wanted just something executing in the context of the work laptop but displayed in my personal laptop's desktop environment. Thanks to my FoMO[4], I left X11 behind a long time ago and have been fully on Wayland-based window managers for some years now (sway and, more recently, niri). This has put me through quite some pain any time I needed to do screen sharing, but it has also graced me with a very lovely piece of software: waypipe.

If you have ever tried ssh -X, waypipe does a similar thing: it proxies Wayland applications from the remote machine to the local one through ssh. Because it is Wayland-aware, it can forward individual Wayland applications from one desktop to the other and makes the remote application react properly to events from the local desktop (for example, resizing works as expected, and spawning another remote application from inside one that is already running works too).

Assuming that the window manager is already running on the remote machine, I can simply run[5]:

    # in one terminal
    waypipe ssh user@worklaptop slack

    # in another terminal
    waypipe ssh user@worklaptop chromium

The rest of my workflow is mostly terminal based, so I just ssh into the machine and create a tmux session.
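Going back to the two waypipe commands: if opening one terminal per application gets tedious, they can be wrapped in a tiny launcher. This is only a sketch and not my actual setup; `REMOTE`, `APPS`, `waypipe_argv` and `launch_all` are names made up for the example, and the host is the same placeholder used in the commands.

```python
import shlex
import subprocess

REMOTE = "user@worklaptop"  # placeholder host, as in the commands in the text
APPS = ["slack", "chromium"]  # hypothetical list of remote applications


def waypipe_argv(app: str, remote: str = REMOTE) -> list[str]:
    """Build the argv for running one remote app through waypipe over ssh."""
    return ["waypipe", "ssh", remote, app]


def launch_all(apps: list[str] = APPS) -> list[subprocess.Popen]:
    """Start one waypipe process per app without waiting for any of them."""
    return [subprocess.Popen(waypipe_argv(app)) for app in apps]


if __name__ == "__main__":
    # Dry run: only print the commands that launch_all() would start.
    for app in APPS:
        print(shlex.join(waypipe_argv(app)))
```

Calling `launch_all()` instead of the dry run starts both applications at once; each one still runs in its own waypipe/ssh session.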

Notifications

There is just one piece missing here: I want to receive on my personal laptop the notifications from the work laptop, together with my local ones.

I did not find any pre-packaged solution, so I came up with two projects that address most of my needs:

- scapegoat: a set of programs to securely forward notifications from one Linux machine to another.
- notilog: a set of programs to store notifications and access their history.

Both interact with dbus through the org.freedesktop.Notifications interface, in different ways.

scapegoat

Notifications in most Linux desktop environments are managed by and delivered through dbus, a bespoke (and quite complex) IPC[6] solution.

To handle (i.e. display and react to) notifications, a program must implement the org.freedesktop.Notifications interface and register as such (only one implementor can be registered at a time). In my desktop setup, I generally use mako as the notification program. The idea with scapegoat is to replace that program, registering as the notification handler on the work laptop, and then forward all the notifications over the network to another program running on the personal machine. This program delivers each notification, adequately mangled, to the local notification handler (again, mako in my case).
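To make the "adequately mangled" part more concrete, here is a minimal sketch in Python. The fields mirror the arguments of the `Notify` method of org.freedesktop.Notifications; the `Notification` class, the `mangle` function and the tagging policy are assumptions made up for this example and not necessarily what scapegoat does.

```python
from dataclasses import dataclass, field, replace


@dataclass(frozen=True)
class Notification:
    """Arguments of org.freedesktop.Notifications.Notify, in spec order."""

    app_name: str
    replaces_id: int
    app_icon: str
    summary: str
    body: str
    actions: list[str] = field(default_factory=list)
    hints: dict[str, object] = field(default_factory=dict)
    expire_timeout: int = -1  # -1 leaves the timeout to the notification server


def mangle(n: Notification, origin: str) -> Notification:
    """Tag a forwarded notification so it is recognizable on the sink side.

    Hypothetical policy: prefix the app name with the origin host and reset
    replaces_id, because ids handed out by the remote handler mean nothing
    to the local one.
    """
    return replace(n, app_name=f"[{origin}] {n.app_name}", replaces_id=0)


remote = Notification("Slack", 42, "", "New message", "lunch?")
local = mangle(remote, "work")
print(local.app_name)  # prints "[work] Slack"
```

The sink would then pass the fields of `local` to the local handler through a regular `Notify` call.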

I called the side on the remote (work) laptop scapegoat-source and the one on the local (personal) laptop scapegoat-sink.

Originally, they spoke through a unix socket forwarded through ssh, but this proved unreliable. I then resorted to a gRPC implementation, to leverage bidirectional communication for notification acknowledgement and mutual authentication through mTLS[7].
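The acknowledgement idea is independent of gRPC and can be sketched in a few lines of plain Python: the source keeps every notification until the sink confirms it, and replays whatever is still pending after a reconnect. `PendingQueue` and its methods are hypothetical names for this illustration, not the scapegoat wire protocol; transport and mTLS are left out entirely.

```python
class PendingQueue:
    """Source-side buffer of notifications not yet acknowledged by the sink."""

    def __init__(self) -> None:
        self._next_seq = 1
        # dicts keep insertion order, so iteration goes from oldest to newest
        self._pending: dict[int, str] = {}

    def send(self, payload: str) -> int:
        """Record a payload as in flight and return its sequence number."""
        seq = self._next_seq
        self._next_seq += 1
        self._pending[seq] = payload
        return seq

    def ack(self, seq: int) -> None:
        """Drop a payload once the sink confirms it; unknown ids are ignored."""
        self._pending.pop(seq, None)

    def unacked(self) -> list[tuple[int, str]]:
        """Payloads to replay, oldest first, for example after a reconnect."""
        return list(self._pending.items())


queue = PendingQueue()
first = queue.send("build finished")
second = queue.send("new message")
queue.ack(first)
print(queue.unacked())  # prints [(2, 'new message')]
```

With a one-way channel the source can never know whether a notification arrived, which is why a bidirectional stream is convenient here.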

notilog

Even now that I am able to forward notifications from one laptop to the other, I can still miss some. I have too many sources of notifications, and when I do miss one I want to be able to consult a history, so that I can switch straight to the right application instead of searching through many.

Enter notilogctl and notilogd. The latter is a daemon that snoops on the local flow of notifications, without blocking them, and logs them either in memory or in a sqlite database. The former is a simple CLI to access the history through the daemon. It offers basic filtering capabilities and pretty printing.
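As an illustration of the "log, then filter" idea, here is a small sketch using Python's built-in sqlite3 module. The table layout and the `open_history`, `log` and `history` helpers are assumptions invented for the example; the real notilog schema and interface may look quite different.

```python
import sqlite3
import time


def open_history(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the history database; ':memory:' keeps it in RAM."""
    db = sqlite3.connect(path)
    db.execute(
        "CREATE TABLE IF NOT EXISTS notifications ("
        " ts REAL NOT NULL, app_name TEXT NOT NULL,"
        " summary TEXT NOT NULL, body TEXT NOT NULL)"
    )
    return db


def log(db, app_name, summary, body="", ts=None):
    """Append one notification, timestamped now unless ts is given."""
    when = time.time() if ts is None else ts
    db.execute(
        "INSERT INTO notifications VALUES (?, ?, ?, ?)",
        (when, app_name, summary, body),
    )
    db.commit()


def history(db, app_name=None, limit=20):
    """Return the most recent entries, newest first, optionally for one app."""
    query = "SELECT ts, app_name, summary, body FROM notifications"
    params = []
    if app_name is not None:
        query += " WHERE app_name = ?"
        params.append(app_name)
    query += " ORDER BY ts DESC LIMIT ?"
    params.append(limit)
    return db.execute(query, params).fetchall()
```

The same code covers both storage modes mentioned above: the default `:memory:` path keeps the history in RAM, while a file path makes it persistent.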

Snooping without intercepting is handy because notilogd does not need to register itself as an org.freedesktop.Notifications implementor, so I can have mako running at the same time. It does so using a debug mechanism offered by dbus.
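To get a feeling for what passive snooping looks like, the stock dbus-monitor tool can watch `Notify` calls on the session bus without registering as a handler. The sketch below only wraps that command; it is an illustration of the general idea, not the mechanism notilogd necessarily uses, and the function names are made up.

```python
import subprocess
from typing import Iterator


def notify_match_rule() -> str:
    """D-Bus match rule selecting calls to Notifications.Notify."""
    return (
        "type='method_call',"
        "interface='org.freedesktop.Notifications',"
        "member='Notify'"
    )


def monitor_argv() -> list[str]:
    """argv for dbus-monitor watching the session bus with that rule."""
    return ["dbus-monitor", "--session", notify_match_rule()]


def watch() -> Iterator[str]:
    """Yield raw dbus-monitor output lines; needs a running session bus."""
    with subprocess.Popen(
        monitor_argv(), stdout=subprocess.PIPE, text=True
    ) as proc:
        assert proc.stdout is not None
        for line in proc.stdout:
            yield line.rstrip("\n")
```

Iterating over `watch()` while something sends a notification prints the intercepted call, and mako (or any other handler) keeps displaying notifications as usual.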

Swan song

Unfortunately, this will soon come to a halt. The new company I am going to work for mandates Mac laptops. A Linux VM might be an option, but I still have to investigate whether it is possible.

I expect to be forced back (I had a Mac many years ago) into that glossy world of polished UIs, a very good-looking glass prison. I have already begun investigating whether there is any mechanism to port scapegoat to macOS, but it seems the OS actively prevents handling notifications, as they are considered privileged information. The philosophy is clear: the user is a consumer, and the ecosystem is curated (i.e. controlled) by Apple and is the opposite of open.

1. RMS, please forgive my laziness: I will write just Linux in the rest of this post.

2. Endpoint Detection and Response (wikipedia): software with kernel-level privileged access, whose aim is to monitor and control the employee machine. This class of software is required in most medium to big companies for two reasons: compliance with requirements from shareholders/third parties, and being able to adequately back the company's claims, should it sue the employee for some reason.

3. A kernel-level snooper on a laptop is a good reason to be worried.

4. Fear of Missing Out: you might already know this; if not, I saved you looking for it on the internet.

5. My full solution leverages systemd user units and a target to spawn all the services I need at once, but the gist of it is in those two commands.

6. Inter-Process Communication.

7. Setting up a pair of certificates such that the two ends are mutually authenticated is not easy. I created a specific binary in the scapegoat project, called scapegoat-certs, to make the process easier.
